Twitter has a story to tell. Two weeks ago (ancient history by Twitter standards, I know) David Austin Walsh, a postdoc in American history at Yale, sent off a tweet lamenting his potential future in academia as a historian. It went viral partly because Walsh has a sizable Twitter following, but also because he blamed his misfortune, in part, on being a white male.

In response, prominent blogger,

of Noahpinion, wrote about the problem of elite overproduction: too many graduate students and an ever decreasing number of tenure track faculty positions. Smith tackles one finite aspect of the many problems in the academic job market and conclusion, that graduate student’s should probably have expectations that are more vague than the highly specific “get a tenure track position in this very narrow field of research,” is good advice, even if it won’t solve academia.What piqued my interest most, however, was one of the comments to Smith’s post. What would have been an innocuous response on twitter about his article if it hadn’t been from another prominent blogger,

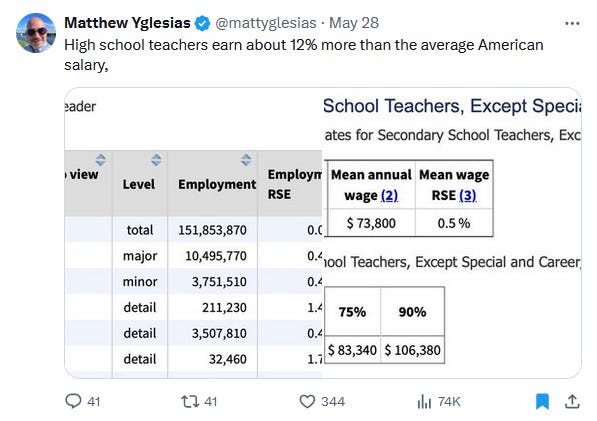

, read, “What really gets me about this particular dynamic is we are not oversupplied with K-12 teachers.” This must have tickled Yglasias’s fancy, because he made several more twitter posts with data (mainly about teacher salaries) to support his assertion that perhaps these history (and other) PhDs should become teachers.All this seems straightforward, until you ask the question, what did Yglesias actually prove with these salary stats? His assertion was that many of these overproduced PhDs could become K-12 teachers and his data is meant to support that assertion by demonstrating that they could still have a good salary if they went down that career path. But does it prove that?

As many pointed out, Yglesias’s comparison of median teacher salary with median American salary may not be the right comparison. How do teacher salaries stack up against median salaries for similarly credentialed positions? Or perhaps, since the demographic we’re talking about are all PhD holders, how do teacher salaries stack up against other professional PhD holders?

But even those questions assume that K-12 teaching is an appropriate comparison to academia. On the face of it, the comparison makes sense: professors teach and teachers teach. The only difference is the age group, right? Wrong. The culminating achievement of graduate school is writing a book-length thesis of original research about a novel problem in the field. The point of grad school is to learn to research, not to teach. Moreover, many graduate students are funded through grants, and even those who do have to be teaching assistants for funding are mostly just grading or running labs. Training in teaching is not an integral part of nearly any graduate program. Most graduate students are aspiring professors not because they want to teach, but because they want to do independent research. Many faculty view teaching as the price they have to pay to do their research in academia, or at best as a form of service. Hardly ever is it viewed as the point of the career.

Not only that, but many academics, quite frankly, suck at teaching. In what way does training to have a world class understanding in an astonishingly niche field prepare someone, or even qualify them, to teach high school American history? I have spent over a decade teaching in higher education, dedicating much more of my time and effort to improving pedagogically than most of my tenure track colleagues. I briefly considered just what Yglesias suggests: becoming a K-12 teacher rather than continuing to be paid peanuts as an adjunct. But there is a problem: sure, I may have written a book about democratic procedures that can help avoid errors in spaceflight development by improving future flexibility, but that wouldn’t give me any advantages teaching a 9th grade civics class.

I’ve also spoken to my numerous friends who are K-12 teachers, and I came to realize one thing: I don’t have sufficient teacher training for K-12. That may sound weird, given that I just noted above my decade of experience and my dedication to pedagogical improvement. Yet, student engagement strategies can vary dramatically by age group; what I do to keep my students engaged wouldn’t work in a K-12 setting. And even though I have engaged more substantially and thoughtfully in pedagogy and teaching methods than many of my tenured or tenure track peers, it only scratches the surface of the pedagogical training my teacher friends bring to bear every day. To be frank: academics aren’t qualified to teach K-12.

This is the fundamental flaw of Yglesias’s argument. The flaw is not that he was wrong to suggest that some academics might find success and fulfillment in a career as a K-12 teacher (some might), but that he thought he could prove it with quantitative data. Making such an argument requires a degree of nuance, values, and qualitative assessments that can never be captured by statistics, no matter how many he threw at the problem.

Too often intelligent and rigorous analysts like Yglesias fall for the seductive allure of quantitative assessment. It feels so convincing to just follow the numbers. Nor is Yglesias unique in this flaw. It crops up constantly in policy making about science and technology as well.

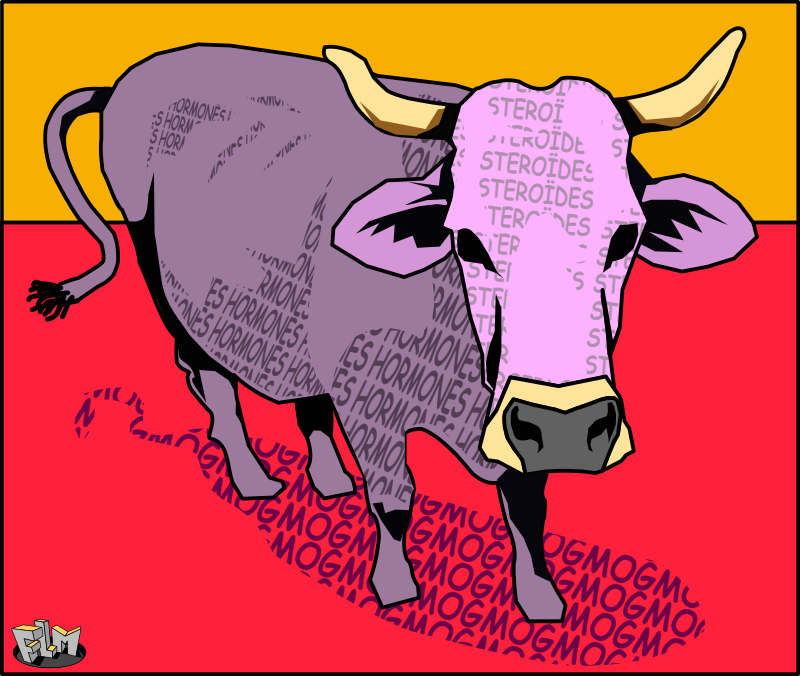

In 1984, scientists working on rBGH (recombinant bovine growth hormone) were achieving huge success. Four drug companies, Monsanto, Eli Lilly, Upjohn, and American Cyanamid, had patents on slightly different versions of the hormone, which could increase milk output in cows by up to 25%. They expected hundreds of millions of dollars in sales every year, and the FDA had just concluded that milk from cows treated with rBGH was safe for human consumption. It was on to field trials to figure out exactly how much yield would increase and the hormone’s effects on cattle.

But it didn’t take long for public opposition to appear. In 1986 a coalition of animal rights groups petitioned the FDA to require an environmental impact assessment. Farmers were already overproducing milk and were slaughtering cows in order to reduce production. Added to that, a study out of Cornell University estimated that introducing rBGH would decrease the number of dairy farms in the US by 25-30% because it would disproportionately benefit larger dairy operations, and smaller ones would be gobbled up. The group thus claimed that rBGH would “damage the environment, cause unnecessary suffering to cows, and wreak havoc on the dairy community.”

When anti-biotech activist Jeremy Rifkin got involved, the issue went mainstream. Then the safety studies started coming out. A doctor named Samuel Epstein published a paper claiming that rBGH milk was higher in insulin-like growth factor 1 (IGF-1), which could cause breast cancer in women. But the FDA cited studies showing that the increase was only equivalent to drinking an extra glass of milk per day, and that IGF-1 is broken down and digested rather than absorbed into the blood. Then opponents pointed out that rBGH increased infections in the cows’ udders, which would increase antibiotic use. So the FDA pointed out that the increase in infection required five times the dose of rBGH that would be used, and that farmers were already prohibited from selling milk from cows that were on an antibiotic treatment. Finally, Congress directed the National Institutes of Health (NIH) to do a comprehensive health study. Surely that would put an end to spurious accusations!

But that isn’t what actually happened. In 1989, five different supermarket chains swore off using rBGH in their milk brands. In 1994, a few months after rBGH’s 1993 FDA approval, both Kroger and 7-Eleven announced that they wouldn’t sell *any* milk from rBGH-treated cows. Then school boards started avoiding milk produced from rBGH cows. Then Maine and Vermont both passed rBGH labeling laws!

It may be tempting to cite science denialism or misinformation as the culprit here. After all, all the facts, the scientific data, supported the idea that rBGH was safe. But this situation looks a lot like telling academics to be teachers. You can show as much data about teaching being a good job as you want, but academics just have different career motivations than teachers. If they wanted to be teachers, they would have been! In the same way, the safety data about rBGH didn’t really sway people because they just had different values and beliefs than the industry scientists. They cared about environmental impacts, small dairy farmers, and holding giant and opaque biotech companies accountable, not just safety. So when the FDA and the NIH trotted out safety data, it just kinda missed the point.

Nuclear power, too, suffered from the same problem. Taylor Dotson and I have written on this in more detail before. But the long and short of it is that trotting out a bunch of statistics about how, even with the Three Mile Island, Chernobyl, and Fukushima disasters, nuclear is still safer than fossil fuels just doesn’t address the root of people’s concerns. That kind of quantitative risk assessment doesn’t get at the dread people feel. Radiation is invisible, and the danger is often not apparent until long after exposure, when the deleterious health effects emerge. Nuclear disasters are also often catastrophic, and catastrophic failures are just scarier to most people than the more drawn out health risks of fossil fuels. This is why, for instance, people tend to fear flying more than driving even though driving is massively more dangerous! It is also why political scientist Edward Woodhouse has deemed nuclear power to be politically risky. Even if the scientific reality is that nuclear power is statistically safe, the dread that people feel means the *political* reality is that nuclear power *is* risky.

I expect some readers might object that I am discarding quantitative assessments. But that’s not what I’m arguing. Indeed, assigning numbers to the things we care to understand can make complexity easier to grasp. It enables straightforward comparisons between alternatives. It explicitly leaves open the possibility of being wrong (“the numbers don’t lie”) and thus the impetus to change one’s mind in the face of new information. All of these are important strengths. But those same strengths can also lead to underestimating complexity and an overly rosy outlook about how much control we have over complex systems. Quantitative assessments also ignore those values about which people cannot be simply right or wrong, or which people are unwilling to alter. In an ideal world we would not give up quantitative assessments, but rather keep their shortcomings in mind, and know that they cannot offer complete answers on their own to the sorts of questions that we find most compelling and important.